Our adventurer discovered that HTTPX's unique advantage lies in its support for HTTP/2, connection pooling, and asynchronous requests. After collecting all of the requests that returned a status code of 200, we can now make several attempts to extract the text content from each response:

```python
from bs4 import BeautifulSoup

# `response` is an HTTP response collected earlier (status code 200)
soup = BeautifulSoup(response.text, 'html.parser')
title = soup.h1.text  # text of the first <h1> element
items = [li.text for li in soup.find_all('li')]  # text of every <li> element
```

I tried the REST API, but I get JSON, not plain text. Does anybody know how to get the conditions and actions of the policy rules as plain text, the way they look in the GUI?

If it's not essential to use BeautifulSoup, you should take a look at html2text. For example: `import html2text`, `html = open('foobar.html').read()`, `print html2text.`

In this tutorial, we'll learn how to use `string` to find by text, and we'll also see how to use it with regex. The syntax for finding by text is `string='your_text'`; for example, we can find the tag whose value is `child 2`.

Next, let's find the elements and get their text values. The `.string` property returns `None` when the element doesn't contain a text value of its own, and our parent element has child tags, not a text value. To get the text values of all its children, we can use the `.strings` property, which returns the text value of the element and the text values of its children. This property returns the result as a generator. As you can see, the program works as expected, but the output includes the newlines. To return the text without newlines, we need to use `.stripped_strings`.

In this tutorial, we've learned two BeautifulSoup properties to get the text value of an element or of an element's children. For more tutorials about BeautifulSoup, check out:

- Understand How to Use the attribute in BeautifulSoup
- BeautifulSoup: How to Find by CSS Selector

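
The properties and the `string` search discussed above can be illustrated with a minimal sketch, assuming `bs4` is installed. The small HTML document and its `child 1` / `child 2` items are hypothetical, made up only for this example.

```python
import re

from bs4 import BeautifulSoup

# Hypothetical HTML: the <ul> holds <li> tags, not a text value of its own
html_doc = """
<ul>
  <li>child 1</li>
  <li>child 2</li>
</ul>
<p>child 2</p>
"""

soup = BeautifulSoup(html_doc, "html.parser")

# find() returns the first matching element; its only child is text, so .string works
first_li = soup.find("li")
print(first_li.string)

# The <ul> has several children (tags and whitespace), so .string is None
ul = soup.find("ul")
print(ul.string)

# .strings is a generator over every text node, whitespace-only ones included
print(list(ul.strings))

# .stripped_strings skips whitespace-only strings and strips the others
print(list(ul.stripped_strings))

# Find by text: with a tag name, string= keeps tags whose .string equals the value
p = soup.find("p", string="child 2")
print(p)

# string= also accepts a compiled regular expression
print(soup.find_all("li", string=re.compile(r"child \d")))
```

Because `.strings` yields the newline and indentation nodes between tags, `.stripped_strings` is usually the more convenient choice when you only want the visible words.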
`.string` and `.strings` are properties that get the text value of elements. This tutorial will teach us when and how to use these two properties.

The `.string` property returns the text value of an element when the element contains a text value. In the following example, we'll get the text value of an element:

```python
from bs4 import BeautifulSoup

# html_doc holds the HTML source as a string
soup = BeautifulSoup(html_doc, 'html.parser')
```

As you can see, we've used the `find()` method to find the first matching element. BeautifulSoup provides many parameters to make our search more accurate, and one of them is `string`.

Beautiful Soup is simple for small-scale web scraping. If you want to scrape webpages on a large scale, you can consider more advanced tools like Scrapy and Selenium.

BeautifulSoup is a scraping library, so it's probably not the best choice for HTML rendering. You need to look at the tags/classes/ids you want to keep within the body. There's still some cleaning to do (mostly because of the ad JavaScript inside the text), but it's mostly there. Parsing with BeautifulSoup does not make the source of the page simpler, though. It's not related, and that "raw" text is just a different CSS style that shows only the text.

I see many web tools support a so-called book view mode, where in most cases you can see only the main article, so I reckon it should not be a problem to extract the clean plain text. So my question is: how can I really obtain clean plain text from HTML with Python? When you look at the raw result, it contains code like:

```
(function() ) \n\nPlease share this article if you like it! Bless me or curse me in comments! Thank you for reading anyway!\n\n\n\n\n'
```

```python
from urllib.request import urlopen

# url is the address of the page to fetch
html = urlopen(url).read().decode('utf8')
```

You may run the Python code above to see the result. The result contains a lot of text that isn't plain article text, so it still doesn't meet my requirement.

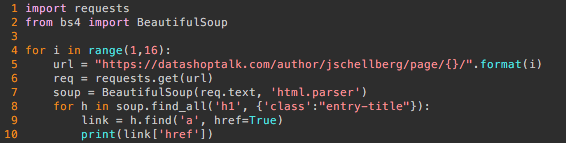

I am trying to extract the plain text from a given URL. According to my search, the most relevant tool seems to be BeautifulSoup, so I wrote a simple program to test it.
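
One common way to approach this question is sketched below, under assumptions: parse the page with BeautifulSoup, delete `<script>`, `<style>` and `<noscript>` elements (where JavaScript such as the `(function() ...)` fragment lives), and join the remaining text with `get_text()`. The function names `html_to_plain_text` and `url_to_plain_text` and the sample HTML are hypothetical, and UTF-8 decoding is assumed as in the question. This does not isolate the main article the way a book view does; you would still pick the tags/classes/ids to keep.

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def html_to_plain_text(html: str) -> str:
    """Return the visible text of an HTML string, one text block per line."""
    soup = BeautifulSoup(html, "html.parser")
    # Remove elements whose contents are code or styling, not readable text
    for tag in soup(["script", "style", "noscript"]):
        tag.decompose()
    # strip=True trims each text node and skips whitespace-only ones
    return soup.get_text(separator="\n", strip=True)


def url_to_plain_text(url: str) -> str:
    """Fetch a page (assumed to be UTF-8) and extract its plain text."""
    with urlopen(url) as response:
        html = response.read().decode("utf8")
    return html_to_plain_text(html)


sample = """
<html><head><style>p {color: red}</style></head>
<body>
  <h1>Title</h1>
  <script>(function() {})();</script>
  <p>Please share this article if you like it!</p>
</body></html>
"""

# Prints "Title" and "Please share this article if you like it!" on two lines
print(html_to_plain_text(sample))
```

Calling `url_to_plain_text(url)` performs the same cleanup on a live page; ads and navigation text that are ordinary HTML (not scripts) will still appear in the output.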
